Jonathan Edwards

Senior Member (Voting Rights)

That seems very mysterious.

They used AI to prove that dead people were not conscious?


The history of myalgic encephalomyelitis, another infection-associated chronic condition and common phenotype of long COVID,⁷ illustrates this dynamic. Institutional researchers frequently conflate two distinct facets of myalgic encephalomyelitis: the symptom of fatigue and the pathophysiological state of post-exertional malaise. Post-exertional malaise is the often-delayed substantial worsening of symptoms following exertion that was tolerated before the illness onset, and can last days, weeks, or permanently. This conflation, and the subsequent failure to understand key features of myalgic encephalomyelitis, is the result of systematic exclusion of patient expertise.

Structural incentives are therefore needed to ensure institutional researchers engage patient expertise meaningfully throughout the research process. We believe that routine patient review of all manuscripts on long COVID, including but not limited to clinical trial protocols and results, pathophysiological research, and opinion pieces, can play this incentivising role.

Anticipation of patient scrutiny at the review stage creates a clear incentive to design and conduct studies that patients would deem methodologically sound and relevant, and meaningfully collaborate with patients throughout the research process. The risk of introducing bias would be low, as the primary purpose of patient review is to ensure the real-world applicability of research and faithfulness to lived experience, although we caution that the technical expertise of some patients should not be underestimated.

Like they reviewed evidence supporting the use of 40 or so treatments for LC?

A short comment by four authors associated with the Patient-Led Research group advocating that patients should routinely review all manuscripts on Long COVID. Mentions ME/CFS. A few quotes:

ScienceDirect Link

A massive seven-year project exploring 3,900 social-science papers has ended with a disturbing finding: researchers could replicate the results of only half of the studies that they tested.

When some of SCORE’s team members attempted to reproduce the data analyses of 600 papers, they found that only 145 contained enough details to do so. And of these, only 53% could be reproduced so that results matched precisely.

Finally, SCORE checked papers’ replicability — the most onerous of the three tasks. Researchers endeavoured to repeat entire experiments, gathering and analysing the data from scratch. Of the 164 studies that they focused on, they were able to replicate only 49% with statistical significance
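The counts implied by the SCORE percentages quoted above can be worked out directly. A minimal sketch in Python; the percentages in the news report are rounded, so the derived counts (roughly 77 precisely reproduced papers and roughly 80 replicated studies) are approximations, not figures taken from the papers themselves:

```python
# Approximate counts implied by the SCORE figures quoted in the Nature News piece.
# The reported percentages are rounded, so the derived counts are estimates.

def implied_count(total: int, share: float) -> int:
    """Round total * share to the nearest whole paper or study."""
    return round(total * share)

# Reproducibility: 600 papers attempted, only 145 had enough detail to try.
attempted = 600
analysable = 145
reproduced = implied_count(analysable, 0.53)  # 53% of the 145 matched precisely

# Replicability: 164 studies repeated from scratch, 49% replicated with significance.
replication_attempts = 164
replicated = implied_count(replication_attempts, 0.49)

print(f"Papers with enough detail: {analysable / attempted:.0%} of {attempted}")
print(f"Precisely reproduced: ~{reproduced} of {analysable} "
      f"(~{reproduced / attempted:.0%} of all {attempted} attempted)")
print(f"Replicated with significance: ~{replicated} of {replication_attempts}")
```

Put that way, only around one in eight of the 600 papers the team set out to reproduce ended up with precisely matching results.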

This is just going to produce the same old issues of peer review and in some circumstances make things worse. They should have already noticed this problem with their own piece: Did they ask patients to review this piece and if so which ones? Only the ones who already share their opinion? It is a bit comedic that this was published behind a paywall, so not accessible to most patients.

Far more disturbing is the huge number of studies and trials that duplicate the same mistakes. No one seems to think of that. I wrote the beginning of an article on that yesterday but I'm all out of juice dealing with some family health issues.

There were three papers in Nature yesterday reporting on a seven-year project that reviewed 3,900 social science papers:

Investigating the replicability of the social and behavioural sciences

Investigating the reproducibility of the social and behavioural sciences

Investigating the analytical robustness of the social and behavioural sciences

There's a Nature News summary here:

Half of social-science studies fail replication test in years-long project

Oh, so this is even worse. This is how an industry ends up producing things so worthless that literature reviews find almost all of them too useless to be used for anything, and have to reject them.

Long-COVID patients demonstrated reduced subcortical volumes of left caudate and right thalamus (p < 0.05), and increased cortical thickness across multiple PFC regions (left-rostral middle-frontal-cortex, left-caudal middle-frontal-cortex, and others; p < 0.05) compared to controls. Left-rostral anterior-cingulate-cortex (lrACC) volume showed positive associations with HVLT-R-Immediate (R² = 0.16, p < 0.01) and Number Span Forward (R² = 0.09, p < 0.05).
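To get a feel for how strong those lrACC associations are, the R² values can be converted back to correlation coefficients. This is a sketch under an assumption the excerpt does not confirm: that each R² comes from a simple one-predictor linear regression (no covariates), in which case R² is just the squared Pearson correlation r².

```python
import math

# R² values reported for lrACC volume against two cognitive test scores.
# Assumption: each comes from a one-predictor linear regression, so R² = r².
reported_r2 = {"HVLT-R-Immediate": 0.16, "Number Span Forward": 0.09}

# Take the positive root, since the associations are reported as positive.
implied_r = {test: math.sqrt(r2) for test, r2 in reported_r2.items()}

for test, r2 in reported_r2.items():
    print(f"{test}: R² = {r2:.2f}, r ≈ {implied_r[test]:.2f}, "
          f"{r2:.0%} of variance in scores explained")
```

On that assumption the correlations are about r = 0.40 and r = 0.30, with lrACC volume accounting for 16% and 9% of the variance in the two test scores.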

Compared with COVID Controls, Long COVID participants showed reduced functional connectivity in the striato-thalamo-cerebellar circuit, specifically among right thalamus, bilateral putamen, and cerebellar regions IV-VII (ps < .001). Long COVID participants also had smaller structural volumes in these regions (ps < .01).

Subjective cognitive complaints following COVID-19 were not accompanied by measurable neuropsychological deficits but were linked to elevated affective symptoms and biological risk markers. Findings highlight a dissociation between perceived and objective cognition and suggest that inflammatory, genetic, and affective factors may shape self-perceived decline. Longitudinal studies are needed to determine whether these markers confer vulnerability to future cognitive decline.

Twenty CFS/ME patients and 20 healthy controls (HC) were included; an independent-samples t-test showed that while the groups did not differ in terms of baseline lactate levels (t = 0.034, p = 0.973), the ME/CFS patients had a significantly higher increase in lactate during stimulation compared to the baseline rest period, expressed as a % change of the rest value (t = 2.847, p = 0.007; mean (SD): ME/CFS 22.20% (12.74), HC 10.55% (13.14)). The change in the comparator, NAA, did not differ between groups (t = 0.85, p = 0.932).
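The lactate result can be sanity-checked from the summary statistics alone. The excerpt does not say which t-test variant was used; assuming Student's pooled-variance test, a minimal sketch using only the standard library reproduces the reported t = 2.847 from the group means, SDs and sizes:

```python
import math

def pooled_t_from_summary(mean1, sd1, n1, mean2, sd2, n2):
    """Student's independent-samples t statistic (equal variances assumed),
    computed from group means, standard deviations and group sizes."""
    df = n1 + n2 - 2
    # Pooled variance: each group's variance weighted by its degrees of freedom.
    pooled_var = ((n1 - 1) * sd1**2 + (n2 - 1) * sd2**2) / df
    # Standard error of the difference between the two means.
    se = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    return (mean1 - mean2) / se, df

# Lactate increase during stimulation, % change from rest (values from the abstract).
t, df = pooled_t_from_summary(22.20, 12.74, 20, 10.55, 13.14, 20)
print(f"t = {t:.3f}, df = {df}")
```

If SciPy is available, `2 * scipy.stats.t.sf(abs(t), df)` gives the two-sided p-value, which should land close to the reported p = 0.007; `scipy.stats.ttest_ind_from_stats` does the whole calculation in one call.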

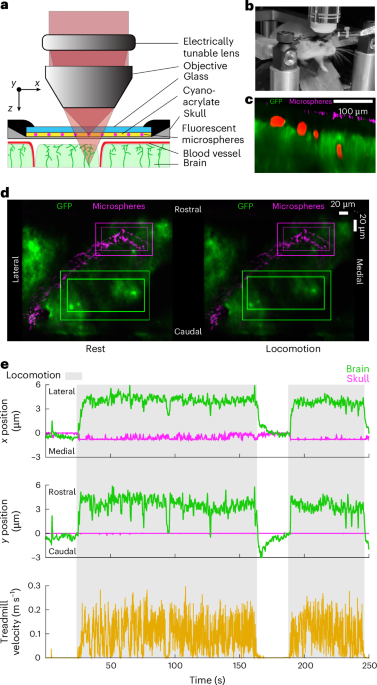

Our simulations indicate that brain motion could cause fluid flows out of the brain, in the opposite direction of glymphatic flow during sleep, potentially explaining why the quiescence during sleep seems to be required to drive fluid flow through the glymphatic system.

Mechanical coupling between the abdomen and the brain is especially interesting considering the functional mechanosensitive channels in CNS neurons and glia, as the forces that cause brain motion could also activate mechanosensitive channels in the brain. In addition to interoceptive pathways in the viscera, the direct signaling through mechanical forces to the brain may play a role in communicating internal states to the brain.

Chronic pain is associated with increased amounts of the stress hormone CRH in the brain, where it is difficult to target. He et al. found that CRH also promotes chronic neuropathic pain from the peripheral nervous system. . . [t]he findings suggest that CRHR2 antagonists that are being explored for other pathologies, such as heart failure, might also be effective for treating neuropathic pain.