Evergreen

Senior Member (Voting Rights)

I know where you're coming from, but I don't think the data we have to date support that position. It is an assumption. I think phase 3 proves that survey data is meaningless and step counts are the true revealer.

Survey data is only reliable if it correlates with step count data. In Ritux P3 it did not; in Dara it did.

Again, self-reported outcomes vs observed outcomes.

In the 2015 phase II study, the people who were thought to have responded particularly well to rituximab had step counts that are the stuff of dreams for most of us:

After 15–20 months follow-up, we had available Sensewear electronic armbands that continuously measured physical activity in the home setting. No data from baseline before intervention were available. The analyses were not preplanned, and were performed only in some patients (mainly in responders). They were performed in order to gain experience with the armbands for design of the protocol for the now ongoing randomized phase III-study. However, 12 out of 14 major responders in this study measured physical activity for 4–6 consecutive days in the time interval 15–20 months follow-up, with a mean value for “mean number of steps per 24h” 9829 (range 5794–18177), and a mean value for “maximum number of steps per 24h” 14623 (range 9310–23407).

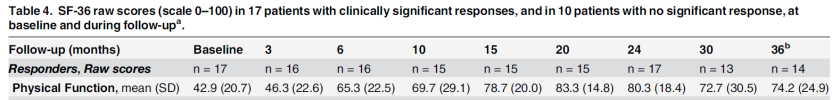

That step count sounds about right for people who are at about 80 on the SF36 PF scale (at 15-20 months when their step count was measured):