Evergreen

Senior Member (Voting Rights)

Oh, it's great to have those numbers; thanks for sharing them, @Trish. I felt hamstrung reading the other papers without SF-36 PF scores. Gosh. I have started reading the paper and looking at the data.

This line of data stands out as a demonstration of the problems with the definitions of moderate and severe ME/CFS:

SF-36 Physical functioning, mean (standard deviation)

Severe ME/CFS 77.7 (18.9)

Moderate ME/CFS 90.4 (11.1)

Long Covid 73.2 (21.5)

Mono controls 98.6 (3.9)

LC controls 93.6 (11.6)

Compare those figures with the PACE trial, where means were around 40 to 60 across the trial. PACE participants were all able to attend clinics, so most severity scales would class them as mild to moderate. To enter the PACE trial, you had to score 65 or less.

What on earth is going on here?

Those SF-36 PF scores look a lot more like what I would expect 6 months post-infection: the ME/CFS group meeting more than one set of criteria (the "severe" group) and the long COVID group would have mostly mild ME/CFS, with those one SD below the mean dipping into moderate.
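A quick way to check the "one SD below the mean" point is to subtract each group's SD from its mean and compare the result with the PACE entry cut-off of 65. This is only a rough sketch: SF-36 PF is bounded at 0 to 100, so these distributions are probably skewed by the ceiling, and mean minus one SD is not a precise percentile. The names `groups`, `one_sd_below` and `PACE_ENTRY_MAX` are just illustrative.

```python
# SF-36 physical functioning (PF) scores from the table above: (mean, SD).
groups = {
    "Severe ME/CFS": (77.7, 18.9),
    "Moderate ME/CFS": (90.4, 11.1),
    "Long Covid": (73.2, 21.5),
    "Mono controls": (98.6, 3.9),
    "LC controls": (93.6, 11.6),
}

# PACE entry required an SF-36 PF score of 65 or less.
PACE_ENTRY_MAX = 65


def one_sd_below(mean: float, sd: float) -> float:
    """Score one standard deviation below the group mean, rounded to 1 dp."""
    return round(mean - sd, 1)


lower = {name: one_sd_below(m, sd) for name, (m, sd) in groups.items()}

for name, score in lower.items():
    eligible = "yes" if score <= PACE_ENTRY_MAX else "no"
    print(f"{name:16} mean - 1 SD = {score:5.1f}  at/below PACE cut-off: {eligible}")
```

With these figures, the Severe ME/CFS and Long Covid groups drop to about 58.8 and 51.7 at one SD below the mean, inside the PACE entry range, while the Moderate ME/CFS group only gets down to about 79.3.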

The SF-36 PF score of the group fulfilling only one set of criteria (the "moderate" group) is so close to that of the LC controls that @Simon M's concern that this group contained people who were not ill looks very well-founded indeed.

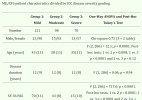

Here's what van Campen et al. (2020) found were the average scores by severity in a cohort who had been ill for an average of 12 years (see the last row; PAS = physical functioning scale):

Using the clinician-assigned ICC severity category, 121 (42%) were scored as having mild disease, 98 patients (34%) were scored as having moderate disease and 70 patients (24%) were scored as having severe disease.
